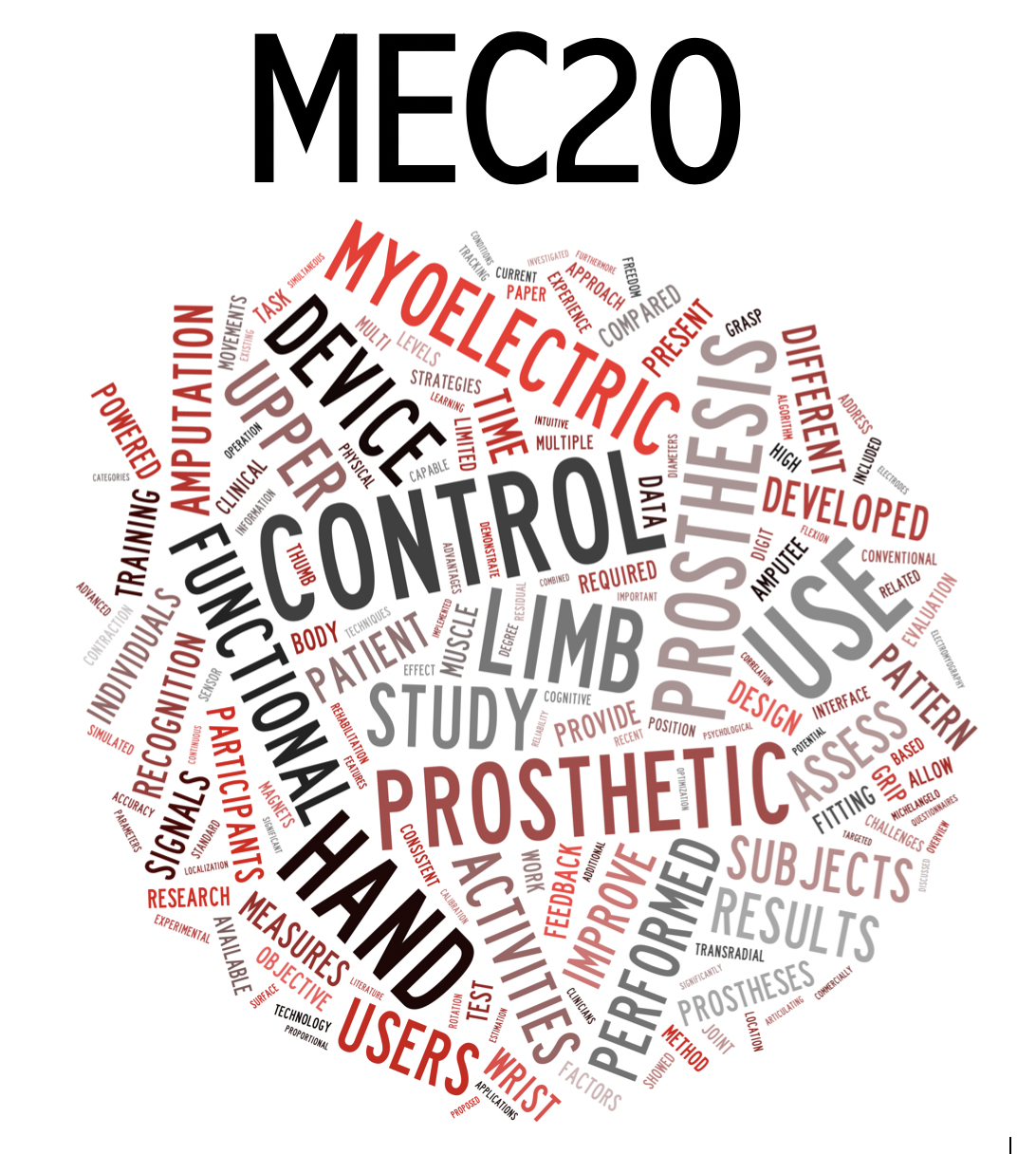

An Algorithm Calibrated with Categorically Labelled EMG for End-to-End Estimation of Continuous Hand Kinematics

DOI: https://doi.org/10.57922/mec.39

Abstract

To restore limb functionality, control of a prosthetic hand should ideally be (I) proportional, i.e. produce speeds that vary with the latent intensity of muscle contractions, and (II) simultaneous, i.e. allow both combined and independent control of the relevant kinematic degrees of freedom (DoFs). These desiderata are not readily attainable with classification-based pattern recognition applied to surface electromyography (sEMG), which can only detect a finite set of categorically encoded gestures. To alleviate these limitations, we introduce a related approach to myocontrol that maps sEMG envelopes directly to multiple continuously encoded DoFs, thereby providing proportionality and simultaneity implicitly. The proposed method, termed myoelectric representation learning (MRL), comprises a deep learning topology and a domain-informed model training scheme. As with conventional pattern recognition, MRL operates exclusively on sEMG and is calibrated without ground-truth limb kinematics, which allows deployment with amputee users. We demonstrate the practical viability of MRL by implementing a virtual control interface that is driven by 8 surface electrodes and decodes 2 kinematic DoFs in real time. To quantify the quality of myoelectric control afforded by MRL, 10 healthy subjects used the interface to complete tests yielding 5 numeric performance metrics. Comparison with a Linear Discriminant Analysis (LDA) benchmark on an identical test showed that MRL outperformed LDA on all computed measures of control efficacy.
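The contrast drawn above between categorical decoding and continuous mapping can be illustrated with a toy sketch. It is not MRL and not the paper's LDA benchmark. The envelope definition (full-wave rectification plus a causal moving average), the nearest-class-mean classifier (equivalent to LDA only under equal priors and a shared isotropic covariance), the linear decoder, and all weights and gesture templates are hypothetical assumptions chosen for illustration. Only the dimensions (8 channels, 2 DoFs) follow the setup described in the abstract.

```python
"""Toy contrast between categorical and continuous myoelectric decoding.

All weights, class means, and channel layouts below are hypothetical
illustrations; they are not the MRL model or the paper's LDA benchmark.
"""
from typing import Dict, List, Sequence


def envelope(signal: Sequence[float], window: int) -> List[float]:
    """Full-wave rectify, then apply a causal moving average (one common sEMG envelope)."""
    rectified = [abs(x) for x in signal]
    out: List[float] = []
    running = 0.0
    for i, r in enumerate(rectified):
        running += r
        if i >= window:
            running -= rectified[i - window]  # drop the sample leaving the window
        out.append(running / min(i + 1, window))
    return out


def classify(features: Sequence[float], class_means: Dict[str, Sequence[float]]) -> str:
    """Categorical decoding: return the label of the nearest class mean.

    This equals LDA only under equal priors and a shared isotropic covariance;
    it stands in for any classifier that outputs one gesture label at a time.
    """
    def sq_dist(mean: Sequence[float]) -> float:
        return sum((f - m) ** 2 for f, m in zip(features, mean))

    return min(class_means, key=lambda label: sq_dist(class_means[label]))


def regress(features: Sequence[float], weights: Sequence[Sequence[float]]) -> List[float]:
    """Continuous decoding: a linear map from channel envelopes to one value per DoF."""
    return [sum(w * f for w, f in zip(row, features)) for row in weights]


# Hypothetical 2 x 8 decoder: DoF 0 driven by channels 0-1 (vs 4-5),
# DoF 1 driven by channels 2-3 (vs 6-7).
WEIGHTS = [
    [1, 1, 0, 0, -1, -1, 0, 0],
    [0, 0, 1, 1, 0, 0, -1, -1],
]
# Hypothetical gesture templates (mean envelopes) for the categorical decoder.
CLASS_MEANS = {
    "rest": [0, 0, 0, 0, 0, 0, 0, 0],
    "dof0_pos": [1, 1, 0, 0, 0, 0, 0, 0],
    "dof1_pos": [0, 0, 1, 1, 0, 0, 0, 0],
}

if __name__ == "__main__":
    weak = [0.6, 0.6, 0, 0, 0, 0, 0, 0]
    strong = [1.2, 1.2, 0, 0, 0, 0, 0, 0]      # same pattern, twice the intensity
    combined = [0.6, 0.6, 0.4, 0.4, 0, 0, 0, 0]  # both DoFs activated at once
    for name, x in [("weak", weak), ("strong", strong), ("combined", combined)]:
        print(name, classify(x, CLASS_MEANS), regress(x, WEIGHTS))
```

In this toy setup, "weak" and "strong" receive the same label from the classifier, whereas the regression output doubles (proportionality). For "combined", the classifier still returns the single label `dof0_pos`, while the regression produces nonzero values on both DoFs (simultaneity).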